history of the entire AI field, i guess [Video]

Video uploaded by bycloud on October 13, 2022.

History of Artificial Intelligence Research From the Beginning to the End

“I believe that at the end of the century the use of words and general educated opinion will have altered so much that one will be able to speak of machines thinking without expecting to be contradicted.” (Alan Turing, “Computing Machinery and Intelligence,” 1950)

The field of artificial intelligence research is filled with detours and dead ends, grandiose unfinished projects, and overlooked paths that may prove crucial in the future. In these pages, I can only provide a brief and undoubtedly incomplete summary.

The very first research in the field of thinking machines was inspired by a convergence of ideas that gradually spread from the late 1930s to the early 1950s. First, research in neurology showed that the brain is an electrical network of neurons. Then Norbert Wiener’s cybernetics, which described control and stability in electrical networks, and Claude Shannon’s information theory, which described digital signals, suggested that it was entirely possible to build an artificial brain. Finally, Alan Turing’s theory of computation showed that any form of computation could be described digitally.

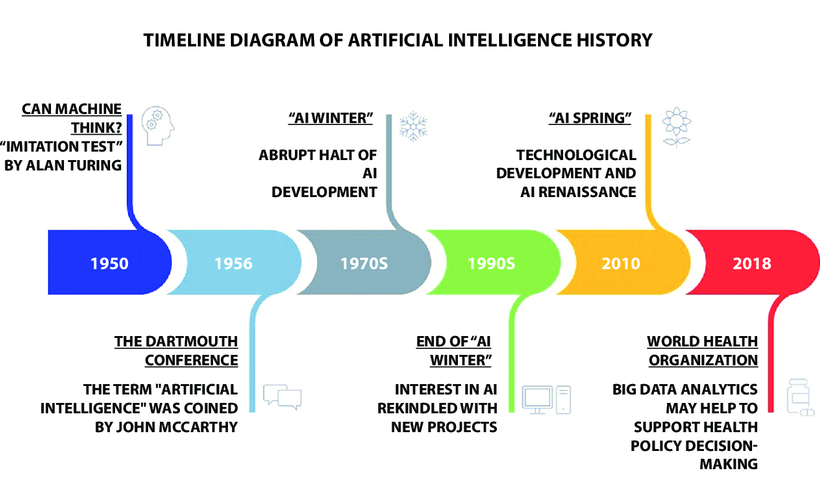

Timeline diagram of artificial intelligence history.

After the first computers were developed during the 1950s and 1960s, AI research slowed down as the difficulty of the problem began to be understood, a difficulty that science fiction authors had simply ignored while depicting capabilities that were impossible with 20th-century technology. Moreover, thinking machines had a bad reputation in popular culture, where electronic brains regularly attacked humans: the mechanical adversaries of Doctor Who, Colossus in Colossus: The Forbin Project, HAL 9000 in 2001: A Space Odyssey, and the various supercomputers of Star Trek. (The American Film Institute even ranked HAL 9000 the 13th greatest villain in American film history.)

During the 1980s, AI research picked up again with the rise of expert systems. (My short story “Ad Majorem Dei Gloriam” features expert systems, which gives you an idea of when it was conceived.) These are programs that answer questions or solve problems in a specific knowledge domain, using logical rules derived from the knowledge of human experts in that domain. Expert systems deliberately restrict themselves to a small, specific domain, which avoids the problem of instilling commonsense knowledge in a computer, and their simple design makes the software relatively easy to build and to improve once deployed. Above all, these programs proved useful: for the first time, artificial intelligence found a practical commercial application in companies.

Alongside these limited systems, attempts were made to develop an artificial intelligence with commonsense knowledge by means of a gigantic database meant to contain all the mundane facts that an average person knows. This became the Cyc project, whose name is derived from en-cyc-lopedia. It was intended to span decades and is still active today, and its proponents consider such a knowledge base a prerequisite for creating strong AI.
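The rule-based reasoning that expert systems rely on can be illustrated with a small forward-chaining loop. This is only a minimal sketch: the rules, the fact names, and the `forward_chain` function are hypothetical and invented for illustration, and real expert systems of the period were far more elaborate.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule pairs the facts it requires with the fact it lets us conclude.
Rule = Tuple[FrozenSet[str], str]

# Hypothetical toy rules for illustration only; not taken from any real system.
RULES: List[Rule] = [
    (frozenset({"fever", "cough"}), "possible_flu"),
    (frozenset({"possible_flu", "short_of_breath"}), "refer_to_doctor"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


if __name__ == "__main__":
    print(sorted(forward_chain({"fever", "cough", "short_of_breath"}, RULES)))
```

The second rule depends on a conclusion of the first, which is why the loop keeps running until a full pass adds nothing new. Improving such a system once deployed amounts to adding or editing entries in the rule list.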

At the end of the 1980s, several researchers argued for a completely new approach to artificial intelligence, centered on robotics. They believed that to demonstrate true intelligence, a machine must have a body: it must perceive, move, survive, and evolve in the world. They argued that these sensorimotor skills are essential to higher-level capacities such as commonsense reasoning, and that abstract reasoning is in fact the least interesting or important human capacity. Several generations of robots were therefore developed, modeled on the human form. (Note that Frank Herbert had intuited this twenty years earlier in Destination: Void, one of the rare novels interested in the design of an AI endowed with consciousness. Embodiment may be necessary for the birth of the mind. The mind-body problem is also a classic in philosophy.)

During the following decade, a new paradigm based on intelligent agents emerged. An intelligent agent is an autonomous entity that perceives its environment through sensors and acts on it through effectors in order to achieve its objectives. Examples range from a simple thermostat to a personal assistant like Siri.
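The perceive, decide, act cycle of an intelligent agent can be sketched with the thermostat example. Everything here is an assumption made for illustration: the `Room` and `ThermostatAgent` classes, the `run` helper, and the toy temperature dynamics do not come from any particular library or textbook.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Room:
    """Toy environment: a temperature that a heater can raise."""
    temperature: float
    heater_on: bool = False

    def step(self) -> None:
        # Assumed dynamics: +1.0 degree per step while heating, -0.5 otherwise.
        self.temperature += 1.0 if self.heater_on else -0.5


class ThermostatAgent:
    """Hypothetical agent that keeps the room near a target temperature."""

    def __init__(self, target: float, tolerance: float = 0.5) -> None:
        self.target = target
        self.tolerance = tolerance

    def perceive(self, room: Room) -> float:
        # Sensor: read the current temperature.
        return room.temperature

    def decide(self, temperature: float, heater_on: bool) -> bool:
        # Objective: stay within the tolerance band around the target.
        if temperature < self.target - self.tolerance:
            return True
        if temperature > self.target + self.tolerance:
            return False
        return heater_on  # inside the band: keep the current state

    def act(self, room: Room, heater_on: bool) -> None:
        # Effector: switch the heater.
        room.heater_on = heater_on


def run(agent: ThermostatAgent, room: Room, steps: int) -> List[float]:
    """Run the perceive-decide-act loop and record the temperatures."""
    history = []
    for _ in range(steps):
        reading = agent.perceive(room)
        agent.act(room, agent.decide(reading, room.heater_on))
        room.step()
        history.append(room.temperature)
    return history


if __name__ == "__main__":
    print(run(ThermostatAgent(target=20.0), Room(temperature=17.0), steps=10))
```

A personal assistant follows the same loop in principle, only with far richer sensors (speech, text), effectors (replies, API calls), and objectives.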

Because the goal of building thinking artifacts remains distant, some researchers have turned to augmented intelligence rather than artificial intelligence: for example, implants that give the human nervous system and brain access to the sensors, information retrieval, and calculation capabilities that computers have.

Links

- The History of Artificial Intelligence – Harvard University

- History of artificial intelligence – Wikipedia
