Apple Changes the Rules for Minors: Applies to iPhone, iPad, and Mac

Users who do not meet the minimum age required for an app will not be able to download it from the App Store. Apple has launched a global age verification system in its App Store, a direct response to stricter international regulations aimed at protecting children and teenagers in digital environments.

With this measure, Apple not only strengthens its regulatory compliance but also sets a benchmark for other tech platforms operating in markets where child protection is a legal priority.

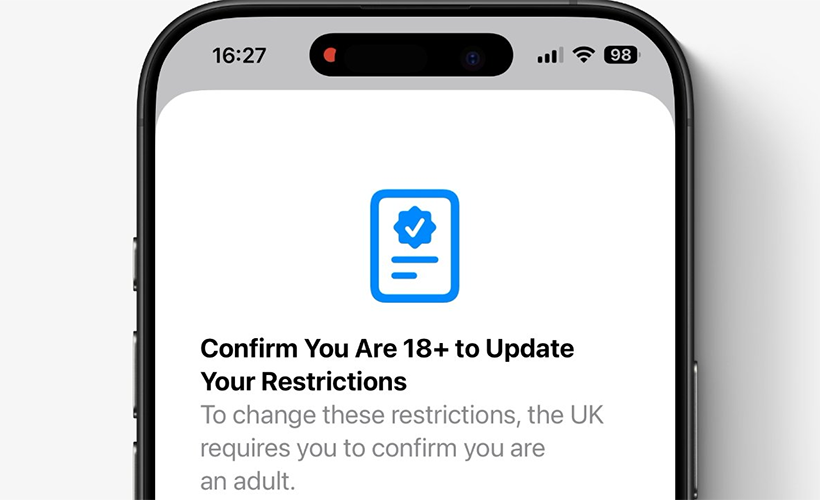

Age verification on Apple: how the new controls for minors work

The rollout of age verification tools across Apple’s ecosystem is not an isolated move — it’s part of a broader strategy to adapt to regulatory frameworks emerging in major international markets.

Europe has led this shift with rules such as the EU’s Digital Services Act (DSA) and the UK’s Age Appropriate Design Code, which require age checks and services designed to prioritize minors’ well-being. Penalties for non-compliance can include multi-million-dollar fines or even app bans in key regions.

Apple has introduced concrete technical mechanisms to meet these requirements while preserving the user experience. The key measures include:

- Preventive download blocking: Users who do not meet an app’s minimum age requirement will be unable to download it from the App Store.

- Verification through Apple ID: When an account is created, the user’s age is recorded and then used to apply restrictions automatically.

- Enhanced parental controls: Tools such as Screen Time and Family Sharing have been strengthened, giving parents more control over their children’s access to different content.

- Detailed content classification: Apple is working with developers to ensure content declarations and target audience labels are accurate and aligned with the new regulations.

These verifications operate at the operating system level, sparing individual apps from needing their own control systems to meet Apple’s basic requirements. However, in some markets, local laws may demand additional validation.
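The download-blocking rule described above can be illustrated with a minimal sketch. It is not Apple's implementation: the `Account` and `AppListing` types, the `can_download` function, and the example app name are all hypothetical. The only assumptions are that a birth date is recorded with the account and that each app declares a minimum age.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Account:
    """Hypothetical account record; the birth date is captured at sign-up."""
    birth_date: date


@dataclass(frozen=True)
class AppListing:
    """Hypothetical store listing with a developer-declared minimum age."""
    name: str
    minimum_age: int


def age_on(birth_date: date, today: date) -> int:
    """Return the number of whole years between birth_date and today."""
    years = today.year - birth_date.year
    # Subtract one year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def can_download(account: Account, app: AppListing, today: date) -> bool:
    """Allow the download only when the account holder meets the minimum age."""
    return age_on(account.birth_date, today) >= app.minimum_age


# Example: a 13-year-old account and an app rated 16+ (both made up).
teen = Account(birth_date=date(2012, 6, 15))
app = AppListing(name="ExampleChat", minimum_age=16)
print(can_download(teen, app, today=date(2026, 1, 18)))  # False
```

Because the check lives in one place and depends only on the account's recorded age and the app's declared rating, individual apps in this sketch need no age logic of their own, which mirrors the system-level approach the article describes.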

Impact on startups and developers

Apple’s introduction of global age verification has immediate consequences for founders and mobile app development teams.

Accurate content classification in App Store Connect is now crucial; errors in labeling can lead to update rejections, removal from the store, or even legal action. It is essential for developers to carefully review and respond to the age rating questionnaires.

Audience segmentation also becomes a challenge for apps targeting both minors and adults. Some startups have opted to create separate versions of their apps, one for minors and one for adults, to comply with the new rules while maintaining market reach.

Social networks accept independent audits to measure their impact on mental health

Major social media platforms have agreed to undergo external audits to assess their impact on teenagers’ mental health. This review, conducted by independent experts, comes amid growing concern and social, political, and legal pressure around youth well-being online.

Meta (parent company of Facebook and Instagram), along with TikTok and Snap, has committed to collaborating in this process, allowing specialists to thoroughly review its policies and safety tools for teenage users, as well as those of the other two companies.

The evaluation will use a set of roughly 24 standards developed by mental health experts to assess how effective the platforms’ safety measures are.

Criteria to be analyzed include tools that limit screen time, options to disable infinite scrolling, and systems that prompt users to take periodic breaks.

Policies regarding exposure to sensitive content—such as material related to suicide or self-harm—will also be reviewed, as well as transparency practices and educational actions on digital risks.

Platforms that meet the standards will receive a distinctive blue shield certification, while those that fail will be flagged for their inability to prevent harmful content exposure.

Links

- Use parental controls to manage your child’s iPhone or iPad – Apple

- Sell pre-owned Apple product online – iGotOffer

- Everything About Apple’s Products – The complete guide to all Apple consumer electronic products, including technical specifications, identifiers and other valuable information.

Every iOS Parental Control You NEED to Know on Apple (iPhone, iPad, Mac) [Video]

Video uploaded by The TechVengers (Jay Ai) on January 18, 2026.
